This week I attended a full-day workshop on parallel computing in Julia offered by WestGrid, a regional partner of Compute Canada, a leader in advanced research computing systems.

I had no parallel computing experience coming into this intensive workshop. On top of that, I am a complete beginner in Julia who needs to master the language and the basics of parallel computing within a short time span. I need this for my undergraduate physics summer research on artificial spin ice systems.

In this post, I would like to reflect on how helpful this kind of workshop can be (or not). It feels like I am learning Julia backward, LOL. Instead of working my way up, I am tackling it from one of the more advanced topics and digging down to the basics. But hey, it’s the purest form of active learning there is (which I am a big advocate for)!

Pre-requisite knowledge.

Know how to use the terminal. This is needed to communicate with the remote cluster, submit your code for execution, and get back the computation results. I am on an Apple M1 MacBook Pro, so my terminal runs the zsh shell. I only learned how to use the terminal in the last month or so.

Familiarity with working in the cluster environment, in particular, with the Slurm scheduler. Slurm is job management software that schedules your job submissions and defines how you want to run your code: e.g., it lets you specify the number of compute nodes to use, the number of cores you want, and a whole bunch of other stuff. To submit a job to the cluster, you need two things: working Julia code and a short Slurm job submission script. The submission script tells the Slurm scheduler which files to execute, and where and how to execute them. The whole point of using the cluster is the computational power that it gives you. As I learned, some of our Julia scripts for simulating artificial bilayer spin ice structures need on average 7-9 days to complete one round of calculations. You probably would not want to run this on your personal computer.

In the past month, I spent quite some time trying to learn how to submit jobs to the cluster. However, I was confused about which parameters I needed to specify in the job submission scripts to ensure the code runs properly. This course on parallel Julia definitely cleared up some things for me! There is also a course on HPC offered by WestGrid, which was very helpful.

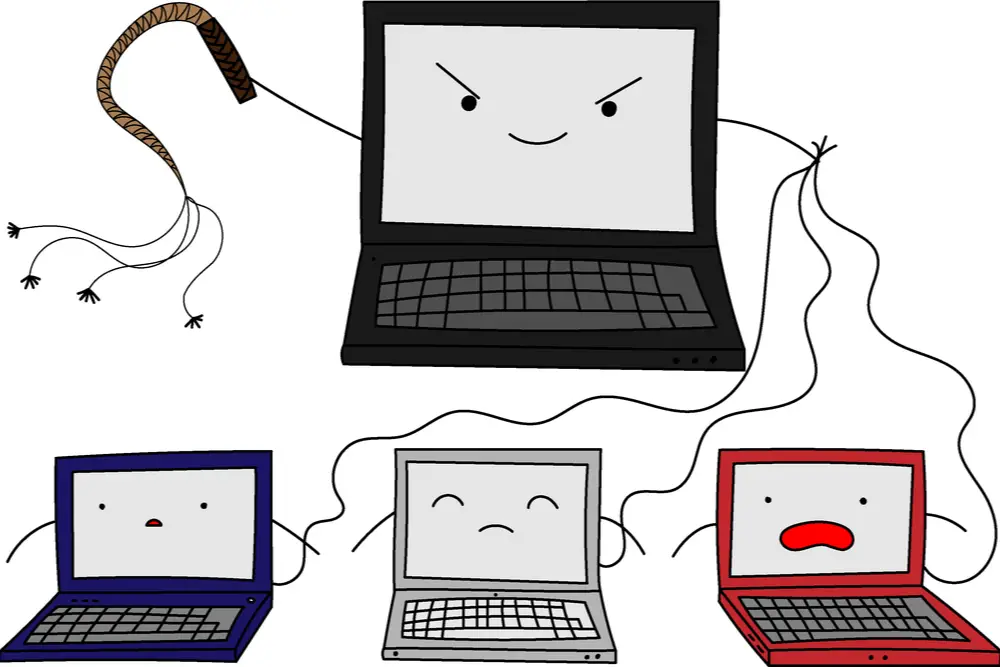

Some basic knowledge of serial Julia. Ideally, we want to run many simulations to gather more data to analyze, so we want to parallelize the code to shorten the simulation run time. Julia has a few modules that let you convert your regular serially executed code into parallel Julia code (or one could write it as parallel code from the beginning). This was the main focus of this course: to learn how to use those modules, how to properly parallelize the code, and how to take advantage of the cluster’s computing power.
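To make the idea of converting serial code concrete, here is a minimal sketch of the same loop written serially and with multi-threading. It is not code from the workshop: the `work` function is a made-up stand-in for one expensive, independent simulation step, and the array size is arbitrary.

```julia
using Base.Threads  # standard library: provides @threads and nthreads()

# Hypothetical stand-in for one expensive, independent calculation.
work(x) = sum(sin(x * k) for k in 1:10_000)

# Serial version: one iteration after another on a single core.
function run_serial(xs)
    out = similar(xs, Float64)
    for i in eachindex(xs)
        out[i] = work(xs[i])
    end
    return out
end

# Threaded version: the iterations are divided among the available threads.
# Each iteration writes only to its own slot of `out`, so there is no data race.
function run_threaded(xs)
    out = similar(xs, Float64)
    @threads for i in eachindex(xs)
        out[i] = work(xs[i])
    end
    return out
end

xs = collect(range(0.0, 1.0; length=200))
println("threads available: ", nthreads())
println("results agree: ", run_serial(xs) == run_threaded(xs))
```

The threaded loop only helps if Julia is started with more than one thread, for example with `julia -t 4 script.jl` or by setting the `JULIA_NUM_THREADS` environment variable.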

Topics covered.

In this course I learned the following topics.

- Processes vs. threads (multi-threading vs. multi-processing).

- Multi-threading (always limited to shared memory within one node with multiple cores).

- How to specify the number of threads to use.

- How to parallelize the code using the @threads and @spawn macros.

- How to write Slurm scheduler job submission scripts for multi-threaded jobs.

- Multi-processing: parallelizing with multiple Unix processes (MPI tasks). This is what allows you to use more than one node on the cluster. Multi-processing can be in shared memory (one node, multiple CPU cores) or distributed memory (multiple cluster nodes).

- Distributed.jl package

- DistributedArrays.jl

- SharedArrays.jl

- How to write Slurm scheduler job submission scripts for multi-process jobs.
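As a rough illustration of the multi-processing side of this list, here is a small sketch using the Distributed standard library. It is my own toy example, not the workshop material: the worker count of 2 and the `work` function are assumptions chosen so that it runs on a laptop. On a cluster, the number of workers would normally match what was requested in the Slurm script.

```julia
using Distributed  # standard library for multi-processing

# Start two local worker processes (assumed count for this sketch).
addprocs(2)

# Every worker is a separate process, so functions must be defined on all of them.
@everywhere work(x) = sum(sin(x * k) for k in 1:10_000)

# pmap hands each element to whichever worker is free and collects the results.
xs = collect(range(0.0, 1.0; length=50))
results = pmap(work, xs)

# @distributed with a reducer splits the index range across the workers
# and combines their partial sums with (+).
function distributed_sum(xs)
    @distributed (+) for i in 1:length(xs)
        work(xs[i])
    end
end
total = distributed_sum(xs)

println("workers: ", nworkers())
println("sum via pmap: ", sum(results))
println("sum via @distributed: ", total)

# Shut the workers down again.
rmprocs(workers())
```

Unlike threads, the workers do not share memory: `xs` is copied to them, which is why the bigger jobs are where packages like DistributedArrays.jl and SharedArrays.jl come into play.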

How the course was structured.

The course consisted of two three-hour-long interactive sessions with a one-hour break in between. It was fast-paced, but if you knew how to use the basic tools described earlier, it wasn’t that bad.

There was some discussion of theory along with demonstrations of the concepts. We also took a couple of serial Julia codes (like a solution to the N-body problem) and parallelized them in a few different ways. We then timed each version to compare the possible speed-up.
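The timing comparison can be sketched like this. It is a toy example under my own assumptions, not the N-body code from the course: `work` is a made-up expensive function, and the work is split into one chunk per thread using `Threads.@spawn`.

```julia
using Base.Threads  # provides nthreads(); @spawn is used as Threads.@spawn

# Hypothetical stand-in for an expensive calculation.
work(x) = sum(sin(x * k) for k in 1:10_000)

# Serial reference: add up work(x) over all elements.
serial_total(xs) = sum(work, xs)

# Task-based version: cut xs into chunks, spawn one task per chunk,
# then fetch and add up the partial sums.
function spawned_total(xs; ntasks = nthreads())
    chunks = Iterators.partition(xs, cld(length(xs), ntasks))
    tasks = [Threads.@spawn sum(work, chunk) for chunk in chunks]
    return sum(fetch, tasks)
end

xs = collect(range(0.0, 1.0; length=400))

# Run each version once first so that compilation time is not measured.
serial_total(xs)
spawned_total(xs)

t_serial = @elapsed serial_total(xs)
t_spawn = @elapsed spawned_total(xs)
println("serial: ", t_serial, " s")
println("spawned: ", t_spawn, " s")
println("speed-up: ", t_serial / t_spawn)
```

With a single thread the two timings should be about the same. Any speed-up only shows up when Julia is started with several threads, and it depends on the machine, so I am not quoting numbers here.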

What I learned in one day.

Considering that I knew nothing about writing parallel Julia code before attending this workshop, I feel like I learned a lot. It definitely helped to have at least some basic knowledge about Julia syntax and the Slurm job scheduler. I also clarified many things about how to write the Slurm job submission scripts for different parallelization techniques. I finally understood what multi-threading and multi-processing are and how those two concepts differ from each other. This will make it easier to decide how to structure and write the Julia code needed for our simulations.

Now it’s time to put my newly acquired knowledge into practice and write some code!